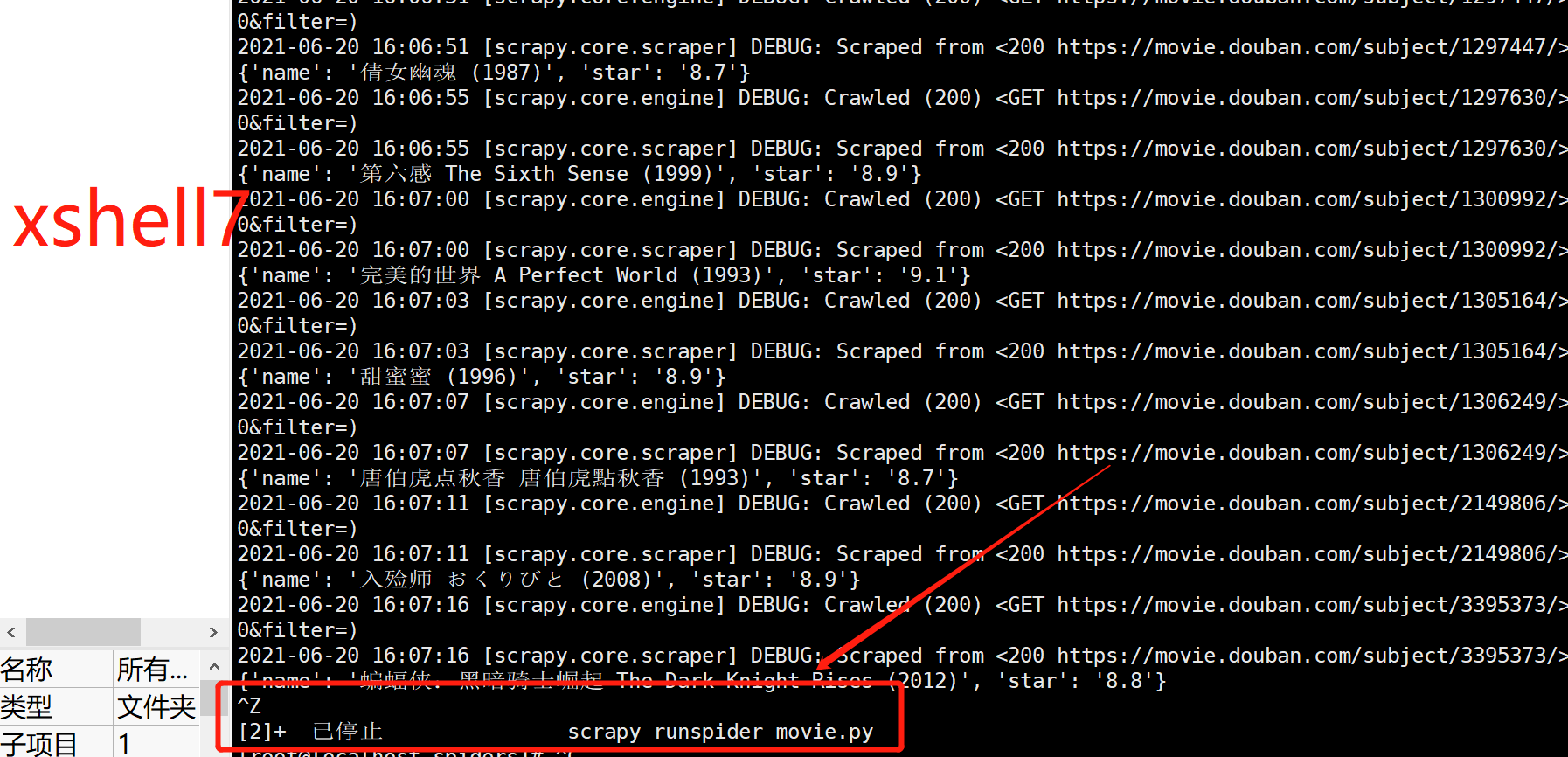

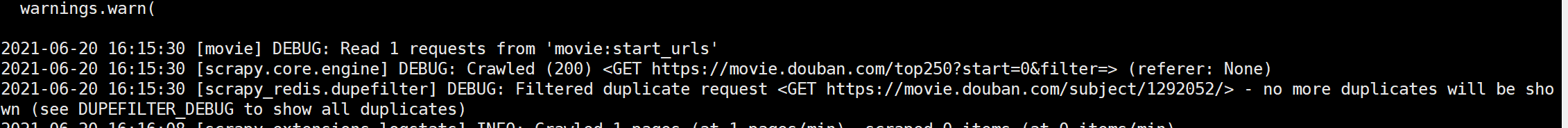

Hello, teacher! How can I pause and resume a crawler program in Xshell? After I pressed Ctrl+Z to stop the program, I don't know how to resume it.

When I run scrapy runspider xxx.py again, it reports that the request was filtered out.
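A possible approach, sketched under assumptions: in a Unix-like shell, Ctrl+Z only suspends the foreground process, and the shell's fg command normally brings it back. For a pause that survives a restart, Scrapy documents a JOBDIR setting that persists the pending request queue and the seen-request fingerprints; the crawl is stopped with a single Ctrl+C and resumed by running the same command again. The helper names build_argv and run_resumable and the directory crawls/zol-1 are hypothetical; the spider name zol is borrowed from the log posted further down.

```python
from typing import List


def build_argv(spider_name: str, job_dir: str) -> List[str]:
    """Build the argument list for a resumable `scrapy crawl` run."""
    # -s NAME=VALUE overrides a setting; JOBDIR tells Scrapy where to
    # persist the pending request queue and seen-request fingerprints.
    return ["scrapy", "crawl", spider_name, "-s", f"JOBDIR={job_dir}"]


def run_resumable(spider_name: str, job_dir: str) -> None:
    """Start (or resume) the crawl; requires Scrapy to be installed."""
    # Imported lazily so build_argv can be used without Scrapy present.
    from scrapy.cmdline import execute

    execute(build_argv(spider_name, job_dir))


# Show the equivalent command line (hypothetical job directory).
print(" ".join(build_argv("zol", "crawls/zol-1")))
```

Calling run_resumable("zol", "crawls/zol-1") from a launcher script, pressing Ctrl+C once, waiting for the spider to shut down cleanly, and then calling it again with the same directory should continue from where the crawl stopped. The same -s JOBDIR=... option can also be appended to a scrapy runspider command.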

2024-05-20 20:19:12 [scrapy.utils.log] INFO: Scrapy 2.6.1 started (bot: scrapy05)

2024-05-20 20:19:12 [scrapy.utils.log] INFO: Versions: lxml 5.2.2.0, libxml2 2.11.7, cssselect 1.1.0, parsel 1.6.0, w3lib 2.1.2, Twisted 22.4.0, Python 3.11.3 (tags/v3.11.3:f3909b8, Apr 4 2023, 23:49:59) [MSC v.1934 64 bit (AMD64)], pyOpenSSL 22.0.0 (OpenSSL 1.1.1n 15 Mar 2022), cryptography 36.0.2, Platform Windows-10-10.0.22621-SP0

2024-05-20 20:19:12 [scrapy.crawler] INFO: Overridden settings:

{'BOT_NAME': 'scrapy05',

'FEED_EXPORT_ENCODING': 'utf-8',

'NEWSPIDER_MODULE': 'scrapy05.spiders',

'SPIDER_MODULES': ['scrapy05.spiders'],

'TWISTED_REACTOR': 'twisted.internet.asyncioreactor.AsyncioSelectorReactor',

'USER_AGENT': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 '

'(KHTML, like Gecko) Chrome/125.0.0.0 Safari/537.36'}

2024-05-20 20:19:12 [asyncio] DEBUG: Using selector: SelectSelector

2024-05-20 20:19:12 [scrapy.utils.log] DEBUG: Using reactor: twisted.internet.asyncioreactor.AsyncioSelectorReactor

2024-05-20 20:19:12 [scrapy.utils.log] DEBUG: Using asyncio event loop: asyncio.windows_events._WindowsSelectorEventLoop

2024-05-20 20:19:12 [scrapy.extensions.telnet] INFO: Telnet Password: 00e3679f6ed09808

2024-05-20 20:19:12 [scrapy.middleware] INFO: Enabled extensions:

['scrapy.extensions.corestats.CoreStats',

'scrapy.extensions.telnet.TelnetConsole',

'scrapy.extensions.logstats.LogStats']

2024-05-20 20:19:12 [scrapy.middleware] INFO: Enabled downloader middlewares:

['scrapy.downloadermiddlewares.httpauth.HttpAuthMiddleware',

'scrapy.downloadermiddlewares.downloadtimeout.DownloadTimeoutMiddleware',

'scrapy.downloadermiddlewares.defaultheaders.DefaultHeadersMiddleware',

'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware',

'scrapy.downloadermiddlewares.retry.RetryMiddleware',

'scrapy.downloadermiddlewares.redirect.MetaRefreshMiddleware',

'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware',

'scrapy.downloadermiddlewares.redirect.RedirectMiddleware',

'scrapy.downloadermiddlewares.cookies.CookiesMiddleware',

'scrapy.downloadermiddlewares.httpproxy.HttpProxyMiddleware',

'scrapy.downloadermiddlewares.stats.DownloaderStats']

2024-05-20 20:19:12 [scrapy.middleware] INFO: Enabled spider middlewares:

['scrapy.spidermiddlewares.httperror.HttpErrorMiddleware',

'scrapy.spidermiddlewares.offsite.OffsiteMiddleware',

'scrapy.spidermiddlewares.referer.RefererMiddleware',

'scrapy.spidermiddlewares.urllength.UrlLengthMiddleware',

'scrapy.spidermiddlewares.depth.DepthMiddleware']

2024-05-20 20:19:12 [scrapy.middleware] INFO: Enabled item pipelines:

['scrapy.pipelines.images.ImagesPipeline']

2024-05-20 20:19:12 [scrapy.core.engine] INFO: Spider opened

2024-05-20 20:19:12 [scrapy.extensions.logstats] INFO: Crawled 0 pages (at 0 pages/min), scraped 0 items (at 0 items/min)

2024-05-20 20:19:12 [scrapy.extensions.telnet] INFO: Telnet console listening on 127.0.0.1:6023

2024-05-20 20:19:13 [scrapy.core.engine] DEBUG: Crawled (200) <GET https://desk.zol.com.cn/bizhi/9812_118227_2.html> (referer: None)

--- Logging error ---

Traceback (most recent call last):

File "E:\Program Files\Python\Python311\Lib\logging\__init__.py", line 1110, in emit

msg = self.format(record)

^^^^^^^^^^^^^^^^^^^

File "E:\Program Files\Python\Python311\Lib\logging\__init__.py", line 953, in format

return fmt.format(record)

^^^^^^^^^^^^^^^^^^

File "E:\Program Files\Python\Python311\Lib\logging\__init__.py", line 695, in format

record.exc_text = self.formatException(record.exc_info)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "E:\Program Files\Python\Python311\Lib\logging\__init__.py", line 645, in formatException

traceback.print_exception(ei[0], ei[1], tb, None, sio)

File "E:\Program Files\Python\Python311\Lib\traceback.py", line 124, in print_exception

te = TracebackException(type(value), value, tb, limit=limit, compact=True)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "E:\Program Files\Python\Python311\Lib\traceback.py", line 690, in __init__

self.stack = StackSummary._extract_from_extended_frame_gen(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "E:\Program Files\Python\Python311\Lib\traceback.py", line 416, in _extract_from_extended_frame_gen

for f, (lineno, end_lineno, colno, end_colno) in frame_gen:

File "E:\Program Files\Python\Python311\Lib\traceback.py", line 353, in _walk_tb_with_full_positions

positions = _get_code_position(tb.tb_frame.f_code, tb.tb_lasti)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "E:\Program Files\Python\Python311\Lib\traceback.py", line 366, in _get_code_position

positions_gen = code.co_positions()

^^^^^^^^^^^^^^^^^

AttributeError: '_Code' object has no attribute 'co_positions'

Call stack:

File "d:\code\python\spider_code\scrapy05\scrapy05\begin.py", line 3, in <module>

execute(['scrapy','crawl','zol'])

File "D:\python_env\spider2_env\Lib\site-packages\scrapy\cmdline.py", line 145, in execute

_run_print_help(parser, _run_command, cmd, args, opts)

File "D:\python_env\spider2_env\Lib\site-packages\scrapy\cmdline.py", line 100, in _run_print_help

func(*a, **kw)

File "D:\python_env\spider2_env\Lib\site-packages\scrapy\cmdline.py", line 153, in _run_command

cmd.run(args, opts)

File "D:\python_env\spider2_env\Lib\site-packages\scrapy\commands\crawl.py", line 27, in run

self.crawler_process.start()

File "D:\python_env\spider2_env\Lib\site-packages\scrapy\crawler.py", line 346, in start

reactor.run(installSignalHandlers=False) # blocking call

File "D:\python_env\spider2_env\Lib\site-packages\twisted\internet\asyncioreactor.py", line 255, in run

self._asyncioEventloop.run_forever()

File "E:\Program Files\Python\Python311\Lib\asyncio\base_events.py", line 607, in run_forever

self._run_once()

File "E:\Program Files\Python\Python311\Lib\asyncio\base_events.py", line 1922, in _run_once

handle._run()

File "E:\Program Files\Python\Python311\Lib\asyncio\events.py", line 80, in _run

self._context.run(self._callback, *self._args)

File "D:\python_env\spider2_env\Lib\site-packages\twisted\internet\asyncioreactor.py", line 271, in _onTimer

self.runUntilCurrent()

File "D:\python_env\spider2_env\Lib\site-packages\twisted\internet\base.py", line 991, in runUntilCurrent

call.func(*call.args, **call.kw)

File "D:\python_env\spider2_env\Lib\site-packages\twisted\internet\task.py", line 680, in _tick

taskObj._oneWorkUnit()

File "D:\python_env\spider2_env\Lib\site-packages\twisted\internet\task.py", line 526, in _oneWorkUnit

result = next(self._iterator)

File "D:\python_env\spider2_env\Lib\site-packages\scrapy\utils\defer.py", line 86, in <genexpr>

work = (callable(elem, *args, **named) for elem in iterable)

File "D:\python_env\spider2_env\Lib\site-packages\scrapy\core\scraper.py", line 207, in _process_spidermw_output

dfd.addBoth(self._itemproc_finished, output, response, spider)

File "D:\python_env\spider2_env\Lib\site-packages\twisted\internet\defer.py", line 538, in addBoth

return self.addCallbacks(

File "D:\python_env\spider2_env\Lib\site-packages\twisted\internet\defer.py", line 477, in addCallbacks

self._runCallbacks()

File "D:\python_env\spider2_env\Lib\site-packages\twisted\internet\defer.py", line 857, in _runCallbacks

current.result = callback( # type: ignore[misc]

File "D:\python_env\spider2_env\Lib\site-packages\scrapy\core\scraper.py", line 267, in _itemproc_finished

logger.log(*logformatter_adapter(logkws), extra={'spider': spider},

Message: 'Error processing %(item)s'

Arguments: {'item': {'image_urls': [['https://desk-fd.zol-img.com.cn/t_s960x600c5/g6/M00/0F/09/ChMkKWF4tn6IeIGwAD5r3l1JSDcAAU-5QFAFu4APmv2924.jpg']]}}

2024-05-20 20:19:13 [scrapy.core.engine] INFO: Closing spider (finished)

2024-05-20 20:19:13 [scrapy.statscollectors] INFO: Dumping Scrapy stats:

{'downloader/request_bytes': 316,

'downloader/request_count': 1,

'downloader/request_method_count/GET': 1,

'downloader/response_bytes': 9708,

'downloader/response_count': 1,

'downloader/response_status_count/200': 1,

'elapsed_time_seconds': 0.339944,

'finish_reason': 'finished',

'finish_time': datetime.datetime(2024, 5, 20, 12, 19, 13, 237143),

'httpcompression/response_bytes': 33746,

'httpcompression/response_count': 1,

'log_count/DEBUG': 4,

'log_count/ERROR': 1,

'log_count/INFO': 10,

'response_received_count': 1,

'scheduler/dequeued': 1,

'scheduler/dequeued/memory': 1,

'scheduler/enqueued': 1,

'scheduler/enqueued/memory': 1,

'start_time': datetime.datetime(2024, 5, 20, 12, 19, 12, 897199)}

2024-05-20 20:19:13 [scrapy.core.engine] INFO: Spider closed (finished)

Teacher, could you please take a look and tell me what went wrong?
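One thing that stands out in the Arguments line of the log is that image_urls holds a list nested inside another list ([[...]]), whereas Scrapy's ImagesPipeline expects a flat list of URL strings. The real exception is hidden by the "--- Logging error ---" traceback, so this is only a likely cause, not a confirmed one. Below is a minimal sketch of normalizing the value before the item is yielded; flatten_urls is a hypothetical helper, not part of Scrapy.

```python
from typing import Any, List


def flatten_urls(value: Any) -> List[str]:
    """Return a flat list of URL strings from a string or nested lists."""
    if isinstance(value, str):
        return [value]
    flat: List[str] = []
    for element in value:
        # Recurse so that [[url]] and [url] both become [url].
        flat.extend(flatten_urls(element))
    return flat


# The nested value exactly as it appears in the log.
nested = [["https://desk-fd.zol-img.com.cn/t_s960x600c5/g6/M00/0F/09/ChMkKWF4tn6IeIGwAD5r3l1JSDcAAU-5QFAFu4APmv2924.jpg"]]
item = {"image_urls": flatten_urls(nested)}
print(item)
```

A nested list like this often appears when the result of a selector call that already returns a list is wrapped in another pair of brackets. The AttributeError about co_positions in the logging traceback may come from a compatibility gap between the installed Twisted version and Python 3.11; if so, upgrading Twisted might let the real error message print.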

Message: 'Error processing %(item)s'

Arguments: {'item': {'image_urls': [['https://desk-fd.zol-img.com.cn/t_s960x600c5/g6/M00/0F/09/ChMkKWF4tn6IeIGwAD5r3l1JSDcAAU-5QFAFu4APmv2924.jpg']]}}

When I open the link above, it shows the following: access is forbidden. What should I do?
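A "forbidden" page when an image URL is opened directly is often the result of hotlink protection, where the image host rejects requests that do not carry a Referer from the originating site. Whether that applies here is an assumption. The sketch below uses only the standard library to build such a request without sending it; build_image_request is a hypothetical helper, and the page URL is the one from the crawl log. In a Scrapy project the same header would typically be set on the requests returned by an overridden ImagesPipeline.get_media_requests.

```python
from urllib.request import Request

PAGE_URL = "https://desk.zol.com.cn/bizhi/9812_118227_2.html"
IMAGE_URL = "https://desk-fd.zol-img.com.cn/t_s960x600c5/g6/M00/0F/09/ChMkKWF4tn6IeIGwAD5r3l1JSDcAAU-5QFAFu4APmv2924.jpg"


def build_image_request(image_url: str, referer: str) -> Request:
    """Build a GET request carrying a Referer header (nothing is sent yet)."""
    return Request(image_url, headers={"Referer": referer})


request = build_image_request(IMAGE_URL, PAGE_URL)
# Confirm the header that would accompany the download.
print(request.get_header("Referer"))
```

Passing the request to urllib.request.urlopen would perform the actual download. If the server still answers with a forbidden status, it may be checking other headers as well, such as User-Agent.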